How Transistors Power the CPU: Function, Evolution, and Future Technologies

You’ll learn how transistors are used in different parts of the CPU, how the number of transistors in CPUs has grown over time, the problems that come with using so many of them, and the new kinds of transistors being developed for future computers.

Figure 1. Transistor in CPU

What Do Transistors Do in a CPU?

Transistors are the basic components that make digital computing possible. In modern processors, especially CPUs, they act as ultra-fast switches that control how current flows through a circuit. This on-and-off switching represents binary values, the 1s and 0s that form the language of computing. Before transistors, computers used vacuum tubes, which were large, slow, and power-hungry. Transistors replaced them and made computers dramatically smaller, faster, and more efficient.

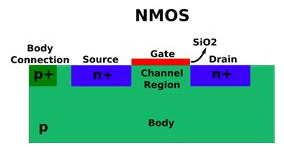

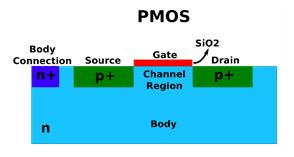

Today, CPUs mostly use a type called the MOSFET (Metal-Oxide-Semiconductor Field-Effect Transistor), which is efficient even at nanometer-scale sizes. MOSFETs come in two types: nMOS and pMOS.

• nMOS turns on when a positive voltage is applied to its gate, allowing current to pass.

Figure 2. nMOS Diagram

• pMOS works the opposite way: it turns on when a low (or negative) voltage, relative to its source, is applied to its gate. Designers combine both types into CMOS (complementary MOS) circuits, which are highly efficient because they draw significant current mainly when switching states. This property makes CMOS ideal for high-speed, high-density processing.

Figure 3. pMOS Diagram
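To make the nMOS/pMOS pairing concrete, here is a minimal switch-level sketch of a two-input CMOS NAND gate. It assumes ideal switches (an nMOS conducts when its gate is 1, a pMOS when its gate is 0) and ignores thresholds, resistance, and leakage; the function names are hypothetical. Two pMOS in parallel pull the output up to the supply, and two nMOS in series pull it down to ground.

```python
def nmos_on(gate: int) -> bool:
    """Ideal nMOS switch: conducts when its gate is driven high (1)."""
    return gate == 1


def pmos_on(gate: int) -> bool:
    """Ideal pMOS switch: conducts when its gate is driven low (0)."""
    return gate == 0


def cmos_nand(a: int, b: int) -> int:
    """Two-input CMOS NAND built from four ideal switches."""
    # Pull-up network: two pMOS in parallel connect the output to the supply (logic 1).
    pull_up = pmos_on(a) or pmos_on(b)
    # Pull-down network: two nMOS in series connect the output to ground (logic 0).
    pull_down = nmos_on(a) and nmos_on(b)
    # In this ideal model exactly one network conducts for any input, so there is
    # never a steady path from supply to ground: current flows mainly while switching.
    assert pull_up != pull_down
    return 1 if pull_up else 0


for a in (0, 1):
    for b in (0, 1):
        print(a, b, cmos_nand(a, b))
```

The loop prints the NAND truth table: the output is 0 only when both inputs are 1.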

Transistors in CPU Architecture

Every part of the CPU, including the Arithmetic Logic Unit (ALU), the Control Unit (CU), the registers, and the internal data paths, is built from transistor circuits. When a CPU receives an instruction, transistors handle it from start to finish: decoding the instruction, sending control signals, fetching the operands, performing the calculation, and storing the result. All of this happens in billionths of a second. Logic gates (made of transistors) decide what to do based on input signals, while other transistor circuits (such as flip-flops) hold data for short periods.

Figure 4. Block Diagram of CPU Architecture

Transistors in the ALU (Arithmetic Logic Unit)

The ALU handles arithmetic and logic operations such as addition, subtraction, comparisons, and bitwise logic. These operations are performed by logic gates (AND, OR, XOR, etc.), which are built from groups of transistors.

For example, a full adder, the basic building block of binary addition, takes dozens of transistors (about 28 in a common static CMOS design) and is replicated once per bit so the ALU can add 32-bit or 64-bit operands. In the simplest arrangement, a ripple-carry adder, each bit must wait for the carry from the bit below it, so designers use techniques like carry-lookahead logic to compute carries in parallel, reducing delay and improving throughput. Because the ALU is one of the most heavily used components in computation-heavy workloads, its performance depends on how well its transistor layout minimizes latency and power usage.
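The sketch below contrasts the two adder styles at the logic level. It is an illustration, not a hardware description: the 8-bit width, the zero carry-in, and the function names are assumptions. The ripple-carry version passes each carry to the next stage; the carry-lookahead version computes every carry directly from per-bit "generate" (both bits 1) and "propagate" (exactly one bit 1) signals, which is what lets hardware evaluate all carries at once.

```python
def full_adder(a: int, b: int, cin: int) -> tuple[int, int]:
    """One-bit full adder written with XOR, AND and OR gates; returns (sum, carry_out)."""
    s = a ^ b ^ cin
    cout = (a & b) | (cin & (a ^ b))
    return s, cout


def ripple_carry_add(x: int, y: int, width: int = 8) -> tuple[int, int]:
    """Chain `width` full adders; each stage must wait for the carry from the stage below."""
    carry, result = 0, 0
    for i in range(width):
        s, carry = full_adder((x >> i) & 1, (y >> i) & 1, carry)
        result |= s << i
    return result, carry


def carry_lookahead_add(x: int, y: int, width: int = 8) -> tuple[int, int]:
    """Compute each carry directly from generate/propagate signals (carry-in assumed 0).

    c[i] = g[i-1] | p[i-1]&g[i-2] | p[i-1]&p[i-2]&g[i-3] | ...
    Each c[i] depends only on the input bits, so hardware can evaluate all of
    them in parallel with wide AND/OR gates; this loop simply computes them in turn.
    """
    g = [((x >> i) & 1) & ((y >> i) & 1) for i in range(width)]  # generate
    p = [((x >> i) & 1) ^ ((y >> i) & 1) for i in range(width)]  # propagate
    carries = [0]
    for i in range(1, width + 1):
        c = 0
        for j in range(i):
            term = g[j]
            for k in range(j + 1, i):
                term &= p[k]
            c |= term
        carries.append(c)
    result = sum((p[i] ^ carries[i]) << i for i in range(width))
    return result, carries[width]


print(ripple_carry_add(200, 100))     # (44, 1): 300 overflows 8 bits
print(carry_lookahead_add(200, 100))  # (44, 1)
```

Both functions produce the same sum and carry-out; the difference that matters in silicon is how long the carry chain takes to settle.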

Transistors in the Control Unit (CU)

The Control Unit is responsible for managing instruction flow inside the CPU. It decodes instructions and sends signals to the right parts of the processor to carry them out. These operations are controlled by networks of transistors arranged in logic circuits.

Timing is very important. A clock signal, typically generated on-chip by a phase-locked loop and distributed through networks of transistor buffers, keeps every part of the processor in step, and flip-flops capture new values on each clock edge. As CPUs adopt more advanced techniques like pipelining and out-of-order execution, the control logic becomes more complex. It must also handle features like branch prediction and error detection, which depend on precise, reliable transistor behavior.
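As a small illustration of how decoding turns opcode bits into control signals, here is a logic-level sketch of a 2-to-4 line decoder built from NOT and AND gates. The 2-bit opcode and the unit names in `CONTROL_LINES` are hypothetical; real instruction decoders are far larger, but they rest on the same idea of exactly one enable line going high per decoded pattern.

```python
def decode_2to4(op1: int, op0: int) -> list[int]:
    """2-to-4 line decoder built from two NOT gates and four AND gates."""
    n1, n0 = 1 - op1, 1 - op0  # inverted opcode bits (NOT gates)
    return [n1 & n0, n1 & op0, op1 & n0, op1 & op0]  # four AND gates


# Hypothetical assignment of each decoder output line to the unit it enables.
CONTROL_LINES = ["ALU_ADD", "ALU_SUB", "LOAD", "STORE"]


def control_signals(opcode: int) -> dict[str, int]:
    """Turn a 2-bit opcode into a one-hot set of control signals."""
    lines = decode_2to4((opcode >> 1) & 1, opcode & 1)
    return dict(zip(CONTROL_LINES, lines))


print(control_signals(0b10))  # {'ALU_ADD': 0, 'ALU_SUB': 0, 'LOAD': 1, 'STORE': 0}
```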

Transistors in Registers and Cache Memory

Registers hold data temporarily during processing. They’re built from flip-flops, each containing several transistors. These bistable circuits keep a bit of data stable until a new value replaces it. This makes registers ideal for fast access to frequently used data or instructions.
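The behavior described above can be sketched at the logic level. This is a behavioral model under simplifying assumptions (ideal gates with no delay, names chosen for illustration): a cross-coupled NOR latch shows the bistable "hold" property, and a `Register` class models D flip-flops that load new data only on a rising clock edge.

```python
def nor(a: int, b: int) -> int:
    """Ideal two-input NOR gate on 0/1 values."""
    return 1 - (a | b)


def sr_latch(s: int, r: int, q: int) -> int:
    """Cross-coupled NOR latch; returns the settled Q for inputs S, R and previous Q."""
    q_bar = 1 - q
    for _ in range(4):  # let the feedback loop settle (a few passes suffice here)
        q = nor(r, q_bar)
        q_bar = nor(s, q)
    return q


class Register:
    """N-bit register modeled as D flip-flops that load on a rising clock edge."""

    def __init__(self, width: int = 8) -> None:
        self.mask = (1 << width) - 1
        self.q = 0
        self._prev_clk = 0

    def tick(self, clk: int, d: int) -> int:
        """Apply clock level `clk` and data `d`; Q changes only on a 0 -> 1 clock edge."""
        if self._prev_clk == 0 and clk == 1:
            self.q = d & self.mask
        self._prev_clk = clk
        return self.q


reg = Register(8)
reg.tick(0, 42)  # no edge yet, Q stays 0
reg.tick(1, 42)  # rising edge: Q becomes 42
reg.tick(1, 99)  # clock still high: Q holds 42
```

With S = R = 0 the latch simply keeps whichever value it already holds, which is the bistable storage the text describes.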

Cache memory, especially L1 and L2, is built using SRAM (Static RAM), where each bit is stored using six transistors. These transistors must be carefully tuned to balance speed, power use, and resistance to interference. Even minor variations in voltage or leakage across billions of transistors can cause delays or data corruption. That’s why transistor quality is important for both speed and stability.
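Because each SRAM bit uses six transistors, cache capacity translates directly into transistor count. The arithmetic below counts only the data array; tags, decoders, and sense amplifiers add more. The 32 KiB and 1 MiB sizes are assumed examples, not figures for a specific CPU.

```python
def sram_data_transistors(capacity_bytes: int, transistors_per_bit: int = 6) -> int:
    """Transistors in a cache's data array of 6T SRAM cells (excludes tags and periphery)."""
    return capacity_bytes * 8 * transistors_per_bit


l1d = sram_data_transistors(32 * 1024)  # assumed 32 KiB L1 data cache
l2 = sram_data_transistors(1024 * 1024)  # assumed 1 MiB L2 cache
print(f"{l1d:,}")  # 1,572,864
print(f"{l2:,}")   # 50,331,648
```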

Evolution of Transistor Counts in CPUs

| CPU Model | Release Year | Transistor Count | Process Node | Description |
|---|---|---|---|---|
| Intel 4004 | 1971 | 2,300 | 10 µm | First commercial microprocessor |
| Intel 8086 | 1978 | 29,000 | 3 µm | Basis for x86 architecture |
| Intel Pentium | 1993 | 3.1 million | 800 nm | Superscalar architecture |
| Intel Core i7-920 | 2008 | 731 million | 45 nm | Introduced Nehalem microarchitecture |
| AMD Ryzen 9 5950X | 2020 | 4.15 billion (per core chiplet; the chip has two, plus an I/O die) | 7 nm | 16-core consumer desktop CPU |
| AMD Threadripper 3990X | 2020 | 39.5 billion | 7 nm (multi-chiplet) | 64-core HEDT processor |
| Apple M1 Ultra | 2022 | 114 billion | 5 nm | High transistor count from two dies joined by a chip interconnect |
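One way to read the table is to compute the average doubling time it implies, the quantity behind Moore's law. The sketch below assumes steady exponential growth between two rows (Intel 4004 and Core i7-920); real growth was not perfectly smooth, so treat the result as an average.

```python
import math


def doubling_time_years(count_start: float, count_end: float, years: float) -> float:
    """Average years per doubling, assuming steady exponential growth."""
    return years / math.log2(count_end / count_start)


# Intel 4004 (1971, 2,300 transistors) to Core i7-920 (2008, 731 million).
t = doubling_time_years(2_300, 731_000_000, 2008 - 1971)
print(f"{t:.2f} years per doubling")  # 2.02 years per doubling
```

About 18 doublings in 37 years works out to roughly one doubling every two years.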

Why Do More Transistors Mean Better Performance?

At the most basic level, each transistor in a CPU serves as a binary switch. It can be either on or off, representing a 1 or a 0 in binary code. Transistors are combined to create logic gates, which in turn form circuits that perform calculations, store data, and make decisions. Increasing the number of transistors in a processor opens up several performance advantages:

• More Complex Circuits: With more transistors, designers can build more sophisticated processing units. For example, they can add cores, improve branch predictors, and integrate larger arithmetic units that handle complex instructions more efficiently.

• Greater Parallelism: A larger transistor budget allows for more execution units to operate simultaneously. This means the CPU can process multiple instructions or threads at the same time, which enhances multitasking and parallel computing performance.

• Larger Caches: More transistors enable the inclusion of larger and more advanced cache memory. Bigger caches help store frequently accessed data closer to the processor, reducing latency and improving throughput by avoiding slower main memory access.

• Enhanced Power Management: Extra transistors allow the integration of fine-grained power control circuits. These circuits can shut down inactive sections of the CPU or dynamically adjust voltage and frequency based on workload, improving energy efficiency without sacrificing performance.

• On-Chip Integration: Additional transistors allow formerly separate components, such as memory controllers, graphics units, and AI accelerators, to be integrated directly onto the CPU die. This reduces communication delay and boosts performance for specific workloads.
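The benefit of larger caches can be estimated with the standard average memory access time (AMAT) formula: hit time plus miss rate times miss penalty. The cycle counts and miss rates below are hypothetical; the sketch only shows why a slightly slower but larger cache can still lower the average latency.

```python
def amat(hit_time: float, miss_rate: float, miss_penalty: float) -> float:
    """Average memory access time: hit time + miss rate * miss penalty (all in cycles)."""
    return hit_time + miss_rate * miss_penalty


# Hypothetical caches: the larger one takes one extra cycle to hit but misses
# less than half as often; a miss to main memory costs 200 cycles in both cases.
small_cache = amat(hit_time=4, miss_rate=0.10, miss_penalty=200)
large_cache = amat(hit_time=5, miss_rate=0.04, miss_penalty=200)
print(f"small: {small_cache:.1f} cycles, large: {large_cache:.1f} cycles")  # small: 24.0 cycles, large: 13.0 cycles
```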

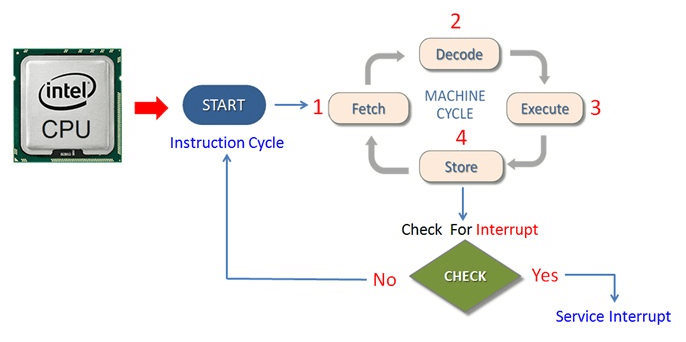

How Does the CPU Process Data?

The CPU carries out tasks by following a systematic sequence known as the fetch-decode-execute cycle. During each phase of this loop, countless transistors operate together to manage control signals, shift logic states, and perform calculations. These tiny switches make it possible for the CPU to complete operations with incredible speed and accuracy.

Figure 5. Diagram of the Fetch-Decode-Execute Cycle

1. Fetch

The cycle begins when the control unit collects the next instruction from memory. This instruction resides at the location specified by the program counter (PC), which tracks the CPU's current position in the instruction stream. The instruction is then moved into the instruction register (IR) for further processing. Transistors within the memory and control circuits act like switches and amplifiers, enabling the instruction to be fetched quickly and reliably.

2. Decode

Once fetched, the instruction is passed to the instruction decoder, which translates the binary opcode and determines what operation the CPU should carry out, such as arithmetic, a logic operation, a data transfer, or a change in control flow. Transistors in the control unit activate the appropriate internal routes so that components like registers, buses, and logic blocks respond accordingly. The entire decoding process relies on transistor networks and logic gates that generate the necessary control signals.

3. Execute

In the execution stage, the CPU performs the operation specified. For computations, the Arithmetic Logic Unit (ALU) handles the work. Built from layers of logic gates and transistors, the ALU performs tasks like addition, subtraction, logical comparisons, and bitwise operations (e.g., AND, OR, XOR). Input data from registers, immediate values, or memory is routed through these transistor circuits with precise timing, enabling fast and efficient execution.

4. Store

After the operation, the result is saved either in a register or in memory. Once again, transistors are important for directing data flow and storing the result without errors. Components like flip-flops and SRAM cells depend on transistor states to reliably hold binary information, ensuring that the output is retained accurately for the next steps.

5. Increment

Finally, the program counter is updated to prepare for the next instruction. In simple sequences, this involves incrementing the address by a fixed value. In cases involving jumps or branches, the PC is reassigned a new address based on instruction outcomes. These updates are managed by control logic made of transistors, which evaluate conditions and generate signals to guide the program’s flow.
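The five steps can be tied together in a toy interpreter. The instruction set here (`LOADI`, `ADD`, `DEC`, `JNZ`, `HALT`) and the program are hypothetical, and software stands in for what the hardware does with transistor circuits; the point is the order of fetch, decode, execute, store, and PC update, including a branch that reassigns the PC.

```python
# Hypothetical toy ISA: each instruction is (opcode, a, b).
#   LOADI r, imm  -> r = imm         ADD r, s -> r = r + s
#   DEC   r, _    -> r = r - 1       JNZ r, addr -> if r != 0: PC = addr
#   HALT  _, _
PROGRAM = [
    ("LOADI", 0, 0),  # r0 = 0 (running sum)
    ("LOADI", 1, 5),  # r1 = 5 (loop counter)
    ("ADD", 0, 1),    # r0 = r0 + r1
    ("DEC", 1, 0),    # r1 = r1 - 1
    ("JNZ", 1, 2),    # loop back while r1 != 0
    ("HALT", 0, 0),
]


def run(memory, num_regs: int = 4, max_steps: int = 1000) -> list[int]:
    regs = [0] * num_regs
    pc = 0
    for _ in range(max_steps):
        ir = memory[pc]           # 1. Fetch: read the instruction at the PC address
        opcode, a, b = ir         # 2. Decode: split opcode and operands
        next_pc = pc + 1          # default: sequential execution
        if opcode == "LOADI":     # 3. Execute and 4. Store the result in a register
            regs[a] = b
        elif opcode == "ADD":
            regs[a] = regs[a] + regs[b]
        elif opcode == "DEC":
            regs[a] = regs[a] - 1
        elif opcode == "JNZ":
            if regs[a] != 0:
                next_pc = b       # branch taken: PC receives a new address
        elif opcode == "HALT":
            return regs
        else:
            raise ValueError(f"unknown opcode {opcode!r}")
        pc = next_pc              # 5. Increment (or jump) for the next cycle
    raise RuntimeError("step limit reached")


print(run(PROGRAM))  # [15, 0, 0, 0]: r0 = 5 + 4 + 3 + 2 + 1
```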

Transistor Challenges in Modern CPU Design

• Leakage and Power Drain

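Tiny transistors can leak current even when turned off, mainly through subthreshold conduction and through quantum tunneling across very thin gate insulators. This idle leakage increases power consumption. To reduce wasted energy, designers use techniques like power gating (cutting power to unused blocks), DVFS (dynamic voltage and frequency scaling, which adjusts voltage and frequency to the workload), and clock gating (stopping the clock to inactive circuits).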
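Of these techniques, DVFS is the easiest to quantify with the widely used first-order model of CMOS switching power, P = αCV²f (activity factor, switched capacitance, supply voltage, clock frequency). The sketch models only this dynamic power, not leakage, and all values are hypothetical. Because voltage enters squared, lowering V and f together by 20% cuts switching power to about half.

```python
def dynamic_power_w(activity: float, capacitance_f: float, voltage_v: float, freq_hz: float) -> float:
    """First-order CMOS switching power, P = alpha * C * V**2 * f, in watts."""
    return activity * capacitance_f * voltage_v ** 2 * freq_hz


# Hypothetical circuit: an activity factor of 0.1 on 1 nF of switched capacitance.
nominal = dynamic_power_w(0.1, 1e-9, voltage_v=1.0, freq_hz=3.0e9)
scaled = dynamic_power_w(0.1, 1e-9, voltage_v=0.8, freq_hz=2.4e9)  # V and f both 20% lower
print(f"nominal: {nominal:.3f} W, scaled: {scaled:.3f} W, ratio: {scaled / nominal:.3f}")
# nominal: 0.300 W, scaled: 0.154 W, ratio: 0.512
```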

• Heat Generation

Densely packed transistors create localized hot spots. Without effective cooling, these can slow performance or cause permanent damage. Modern CPUs counter this with temperature sensors, automatic throttling, and cooling systems like heat spreaders, vapor chambers, or liquid cooling.

• Aging

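Transistors and the wires that connect them degrade over years of use due to effects such as electromigration (metal atoms in the interconnect gradually displaced by current flow) and breakdown of the gate insulator. This aging can reduce performance or cause failures, so designers build in safety margins and implement error-correction systems to ensure reliable, long-term operation.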

• Slower Interconnects

While transistors continue to shrink, the wires connecting them do not scale as well: as wires become thinner, their resistance rises, and the combined resistance and capacitance (RC delay) slows signals. Designers mitigate this by reorganizing signal paths and inserting buffers (repeaters) along long wires.
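A first-order (Elmore) model shows why repeaters help. A wire's total resistance and capacitance both grow with its length, so its delay grows with length squared; cutting it into segments, each driven by a buffer, makes the total delay grow roughly linearly instead. The per-millimeter values and the 20 ps buffer delay are hypothetical, and the model ignores the buffers' own drive resistance and input capacitance.

```python
def wire_delay_s(r_ohm_per_mm: float, c_f_per_mm: float, length_mm: float) -> float:
    """First-order (Elmore) delay of a distributed RC wire: 0.5 * R_total * C_total."""
    return 0.5 * (r_ohm_per_mm * length_mm) * (c_f_per_mm * length_mm)


def repeated_wire_delay_s(r_ohm_per_mm: float, c_f_per_mm: float, length_mm: float,
                          segments: int, buffer_delay_s: float) -> float:
    """Split the wire into equal segments, each driven by a repeater with a fixed delay.

    Simplification: repeater drive resistance and input capacitance are ignored.
    """
    per_segment = wire_delay_s(r_ohm_per_mm, c_f_per_mm, length_mm / segments)
    return segments * (per_segment + buffer_delay_s)


# Hypothetical values: 200 ohm/mm, 0.2 pF/mm, a 5 mm wire, 20 ps per repeater.
plain = wire_delay_s(200, 0.2e-12, 5)
buffered = repeated_wire_delay_s(200, 0.2e-12, 5, segments=5, buffer_delay_s=20e-12)
print(f"unbuffered: {plain * 1e12:.0f} ps, with repeaters: {buffered * 1e12:.0f} ps")
# unbuffered: 500 ps, with repeaters: 200 ps
```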

• Lithography and Fabrication Limits

Traditional photolithography struggles to define features much smaller than the wavelength of the light it uses (193 nm for today's deep-ultraviolet immersion tools), which causes edge distortions and defects. Extreme Ultraviolet (EUV) lithography, which uses 13.5 nm light, helps solve this, but it is expensive and technically demanding, driving up manufacturing costs.
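The link between wavelength and feature size is usually estimated with the Rayleigh criterion, CD = k₁·λ/NA, where NA is the lens's numerical aperture and k₁ is a process-dependent factor. The values below (k₁ = 0.28, NA 1.35 for immersion DUV, NA 0.33 for EUV) are assumptions for a single exposure; multiple-patterning techniques let DUV print finer pitches at higher cost.

```python
def min_feature_nm(k1: float, wavelength_nm: float, numerical_aperture: float) -> float:
    """Rayleigh resolution estimate for a single exposure: CD = k1 * wavelength / NA."""
    return k1 * wavelength_nm / numerical_aperture


duv = min_feature_nm(0.28, 193, 1.35)   # assumed ArF immersion parameters
euv = min_feature_nm(0.28, 13.5, 0.33)  # assumed EUV parameters
print(f"DUV: {duv:.1f} nm, EUV: {euv:.1f} nm")  # DUV: 40.0 nm, EUV: 11.5 nm
```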

• Balancing Speed, Power, and Heat

CPUs must deliver speed without consuming too much power or overheating, a tough trade-off, especially in mobile and data center applications. Approaches such as dark silicon (keeping parts of the chip powered off at any given time), adiabatic computing (logic that recovers some of its switching energy), and specialized hardware accelerators improve energy efficiency while preserving performance.

Advanced Transistor Technologies

As traditional flat (planar) transistors reach their physical limits, new and more advanced designs are being developed. These new types of transistors help make chips faster, smaller, and more efficient.

FinFETs

FinFETs are one of the most widely used advanced transistor designs today. Instead of lying flat like older transistors, a FinFET's channel is a thin vertical structure shaped like a fin rising from the surface of the chip. The gate, which controls the current, wraps around this fin on three sides. This gives the gate more control over the flow of current, which reduces unwanted leakage and makes the transistor more reliable. Because of their better performance and lower power use, FinFETs are now used in many smartphones, laptops, and other modern electronics. They first appeared in commercial chips at the 22 nm node and have since been scaled down to even smaller sizes.

Gate-All-Around (GAA) Transistors

GAA transistors are an evolution of FinFETs. While a FinFET's gate wraps around three sides of the channel, a GAA transistor's gate completely surrounds the channel on all sides. This "all-around" control makes it even easier to manage the flow of current and reduce power loss. GAA transistors often use "nanosheets" or "nanowires," in which the channel is split into thin layers or wires and the gate wraps around each one. This allows designers to fine-tune performance and power usage more precisely than before. GAA is expected to be a key technology for chips built on 3-nanometer-class and smaller processes, making future devices faster and more energy efficient.

Carbon Nanotube and Graphene Transistors

Carbon nanotubes are tiny cylinders of carbon atoms with excellent electrical and thermal properties. Transistors built from them could switch faster than silicon devices and be made much smaller, allowing more transistors to fit in the same space. Graphene is a sheet of carbon just one atom thick. It is extremely strong, flexible, and conducts electricity very efficiently, although pristine graphene has no bandgap, which makes it hard to switch fully off. These materials could lead to faster, smaller, and cooler-running chips. However, building transistors with nanotubes or graphene is very difficult because the manufacturing process must be extremely precise; even the smallest defect can ruin the tiny structures.

Quantum Transistors

Quantum devices work very differently from traditional transistors. Instead of bits that are either 0 or 1, they use qubits (quantum bits), which can exist in a superposition of 0 and 1. Qubits can also be entangled, meaning their measurement outcomes are correlated no matter how far apart they are. For certain problems, quantum algorithms can exploit these properties to outperform classical computers, which makes quantum hardware promising for tasks like simulating molecules, factoring large numbers (which threatens widely used encryption schemes), and solving some complex mathematical problems.

Neuromorphic Transistors

Neuromorphic transistors are designed to behave like the neurons and synapses of the brain. In the brain, neurons send signals to each other across tiny gaps called synapses, and neuromorphic transistors try to mimic this behavior with electronic components. They are used in neuromorphic computing, a new approach aimed at tasks that involve learning, pattern recognition, and decision-making. For example, neuromorphic chips can be used in artificial intelligence systems that recognize images, process speech, or learn from data in real time.
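A common abstraction behind neuromorphic designs is the leaky integrate-and-fire neuron: it accumulates input, slowly "leaks" charge, and emits a spike when it crosses a threshold. The software sketch below only illustrates that behavior; neuromorphic hardware implements such dynamics with dedicated analog or digital circuits, and the threshold and leak values here are arbitrary assumptions.

```python
def lif_neuron(inputs: list[float], threshold: float = 1.0,
               leak: float = 0.9, reset: float = 0.0) -> list[int]:
    """Leaky integrate-and-fire neuron; returns 1 for each time step that spikes.

    Each step the membrane potential decays by `leak`, adds the input, and
    fires (then resets) when it reaches `threshold`.
    """
    v = reset
    spikes = []
    for current in inputs:
        v = leak * v + current
        if v >= threshold:
            spikes.append(1)
            v = reset
        else:
            spikes.append(0)
    return spikes


print(lif_neuron([0.3] * 10))  # [0, 0, 0, 1, 0, 0, 0, 1, 0, 0]
```

A steady weak input makes the neuron fire periodically, while no input produces no spikes, so information is carried by spike timing rather than by continuously switching logic.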

Conclusion

Transistors make everything in a CPU work. They switch on and off rapidly to help the computer do math, make decisions, and move data. As more transistors are added to chips, CPUs get faster and more powerful, but they also use more energy and get hotter. To address these problems, engineers are adopting new designs like FinFETs and GAA and testing new materials like carbon nanotubes and graphene. Some new transistors are even designed to act like brain cells. These changes help computers stay fast, efficient, and ready for future challenges.

ALLELCO LIMITED

Frequently Asked Questions [FAQ]

1. Why does transistor size matter in CPUs?

Smaller transistors mean more can fit on a chip, improving speed and power efficiency. They also enable higher performance per watt and support complex features like AI acceleration.

2. What’s the difference between CPU and GPU transistors?

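The transistors themselves are largely the same kind of device; the difference lies in how the transistor budget is spent. CPUs devote many transistors to large caches, branch prediction, and out-of-order logic for fast general-purpose, serial tasks, while GPUs devote more to many smaller, simpler cores that process graphics and AI workloads in parallel.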

3. How do transistors affect CPU clock speed?

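Transistors must switch on and off quickly for a CPU to reach high clock speeds. Each clock period must be long enough for signals to pass through the slowest (critical) path of logic between flip-flops, so faster-switching transistors shorten that path and allow higher frequencies. In practice, power and heat also limit how high the clock can go.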
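A simple timing budget shows the relationship: the clock period must cover the flip-flop's clock-to-output delay, the logic delay of the critical path, and the receiving flip-flop's setup time. The delays below are hypothetical, and the model ignores clock skew and jitter.

```python
def max_clock_ghz(clk_to_q_ps: float, logic_delay_ps: float, setup_ps: float) -> float:
    """Highest clock frequency whose period covers the slowest register-to-register path."""
    period_ps = clk_to_q_ps + logic_delay_ps + setup_ps
    return 1000.0 / period_ps  # 1000 ps = 1 ns, and 1 / ns = GHz


# Hypothetical critical path: 20 ps clock-to-Q, 180 ps of gates, 20 ps setup (220 ps total).
print(f"{max_clock_ghz(20, 180, 20):.2f} GHz")  # 4.55 GHz
```

If faster transistors cut the logic delay to 150 ps, the same formula allows about 5.26 GHz.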

4. What causes transistor failure in CPUs?

Common causes include heat stress, electromigration, voltage spikes, and insulation breakdown over time. These effects gradually shift transistor characteristics, slow switching, and can eventually lead to permanent chip failure.

5. Can transistors be repaired in a CPU?

No, transistors inside CPUs are not repairable. If too many fail or degrade, the entire chip’s performance suffers, and the only solution is replacement.
